Publications

2023

- Lessons from COVID-19 for rescalable data collection. Sangeeta Bhatia, Natsuko Imai, Oliver J. Watson, and 12 more authors. The Lancet Infectious Diseases, May 2023

Novel data and analyses have had an important role in informing the public health response to the COVID-19 pandemic. Existing surveillance systems were scaled up, and in some instances new systems were developed to meet the challenges posed by the magnitude of the pandemic. We describe the routine and novel data that were used to address urgent public health questions during the pandemic, underscore the challenges in sustainability and equity in data generation, and highlight key lessons learnt for designing scalable data collection systems to support decision making during a public health crisis. As countries emerge from the acute phase of the pandemic, COVID-19 surveillance systems are being scaled down. However, SARS-CoV-2 resurgence remains a threat to global health security; therefore, a minimal cost-effective system needs to remain active that can be rapidly scaled up if necessary. We propose that a retrospective evaluation to identify the cost-benefit profile of the various data streams collected during the pandemic should be on the scientific research agenda.

- Understanding COVID-19 reporting behaviour to support political decision-making: a retrospective cross-sectional study of COVID-19 data reported to WHO. Auss Abbood, Alexander Ullrich, and Luisa A Denkel. BMJ Open, Jan 2023

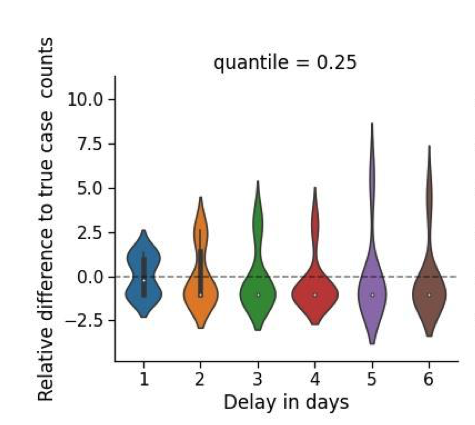

Objective: Daily COVID-19 data reported by WHO may provide the basis for political ad hoc decisions including travel restrictions. Data reported by countries, however, are heterogeneous and metrics to evaluate their quality are scarce. In this work, we analysed COVID-19 case counts provided by WHO and developed tools to evaluate country-specific reporting behaviours.

Methods: In this retrospective cross-sectional study, COVID-19 data reported daily to WHO from 3 January 2020 until 14 June 2021 were analysed. We proposed the concepts of binary reporting rate and relative reporting behaviour and performed descriptive analyses for all countries with these metrics. We developed a score to evaluate the consistency of incidence and binary reporting rates. Further, we performed spectral clustering of the binary reporting rate and relative reporting behaviour to identify salient patterns in these metrics.

Results: Our final analysis included 222 countries and regions. Reporting scores varied between -0.17, indicating discrepancies between incidence and binary reporting rate, and 1.0, suggesting high consistency of these two metrics. Median reporting score for all countries was 0.71 (IQR 0.55–0.87). Descriptive analyses of the binary reporting rate and relative reporting behaviour showed constant reporting with a slight ‘weekend effect’ for most countries, while spectral clustering demonstrated that some countries had even more complex reporting patterns.

Conclusion: The majority of countries reported COVID-19 cases when they did have cases to report. The identification of a slight ‘weekend effect’ suggests that COVID-19 case counts reported in the middle of the week may represent the best data basis for political ad hoc decisions. A few countries, however, showed unusual or highly irregular reporting that might require more careful interpretation. Our score system and cluster analyses might be applied by epidemiologists advising policy makers to consider country-specific reporting behaviours in political ad hoc decisions.

Data availability: Data are available in a public, open access repository. Extra data can be accessed via the Dryad data repository at http://datadryad.org/ with the doi: 10.5061/dryad.9s4mw6mmb and from GitHub at https://github.com/aauss/reporting_behavior.
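As a rough illustration of the ‘weekend effect’ mentioned in this abstract, the sketch below computes, per weekday, the share of days on which a non-zero case count was reported. This is only one plausible reading and not the study's own definition of the binary reporting rate; the function name `weekday_reporting_share` and the two-week series are hypothetical.

```python
from collections import defaultdict
from datetime import date, timedelta

WEEKDAYS = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def weekday_reporting_share(daily_counts):
    """Return, per weekday, the share of days with a non-zero reported count.

    daily_counts maps datetime.date -> reported case count (hypothetical input).
    """
    reported = defaultdict(int)
    total = defaultdict(int)
    for day, count in daily_counts.items():
        name = WEEKDAYS[day.weekday()]
        total[name] += 1
        if count > 0:
            reported[name] += 1
    return {name: reported[name] / total[name] for name in total}


# Made-up two-week series starting on Monday 4 January 2021:
# cases are reported every day except Sundays.
start = date(2021, 1, 4)
counts = {
    start + timedelta(days=i): (0 if i % 7 == 6 else 10 + i)
    for i in range(14)
}
shares = weekday_reporting_share(counts)
```

In this toy series the share drops only on Sundays; real reporting data would call for the metrics defined in the paper itself.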

2022

- General Framework for Evaluating Outbreak Prediction, Detection, and Annotation Algorithms. Auss Abbood and Stéphane Ghozzi. medRxiv, Mar 2022

The COVID-19 pandemic has highlighted and accelerated the use of algorithmic decision support for public health. The latter’s potential impact and risk of bias and harm urgently call for scrutiny and evaluation standards. One example is the early detection of local infectious disease outbreaks. Whereas many statistical models have been proposed and disparate systems are routinely used, each tailored to specific data streams and uses, no systematic evaluation strategy of their performance in a real-world context exists.

One difficulty in evaluating outbreak prediction, detection, or annotation lies in the scales of different approaches: How to compare slow but fine-grained genetic clustering of individual samples with rapid but coarse anomaly detection based on aggregated syndromic reports? Or alarms generated for different, overlapping geographical regions or demographics?

We propose a general, data-driven, user-centric framework for evaluating heterogeneous outbreak algorithms. Discrete outbreak labels and case counts are defined on a custom data grid; associated target probabilities are then computed and compared with algorithm output. The latter is defined as discrete “signals” generated for a number of grid cells (the finest available in the benchmarking data set) with different weights and prior outbreak information, from which estimated outbreak label probabilities are then derived. The prediction performance is quantified through a series of metrics, including confusion matrix, regression scores, and mutual information. The dimensions of the data grid can be weighted by the user to reflect epidemiological criteria.
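Two of the metrics named in this abstract, the confusion matrix and mutual information, can be sketched for the simplest case of hard binary outbreak labels and binary algorithm signals, one pair per grid cell. This is a minimal sketch under that assumption; it does not implement the framework's target probabilities or cell weights, and the example vectors are made up.

```python
import math
from collections import Counter


def confusion_matrix(labels, signals):
    """Count true/false positives and negatives for binary labels vs. signals."""
    counts = Counter(zip(labels, signals))
    return {
        "tp": counts[(1, 1)],
        "fn": counts[(1, 0)],
        "fp": counts[(0, 1)],
        "tn": counts[(0, 0)],
    }


def mutual_information(labels, signals):
    """Mutual information (in bits) between two discrete sequences."""
    n = len(labels)
    joint = Counter(zip(labels, signals))
    label_counts = Counter(labels)
    signal_counts = Counter(signals)
    mi = 0.0
    for (label, signal), count in joint.items():
        p_joint = count / n
        p_independent = (label_counts[label] / n) * (signal_counts[signal] / n)
        mi += p_joint * math.log2(p_joint / p_independent)
    return mi


# Hypothetical grid of eight cells: 1 = outbreak / signal raised, 0 = none.
labels = [1, 1, 0, 0, 1, 0, 0, 0]
signals = [1, 0, 0, 0, 1, 1, 0, 0]
cm = confusion_matrix(labels, signals)
mi = mutual_information(labels, signals)
```

Mutual information is zero when signals carry no information about the labels and equals the label entropy when the two agree perfectly, which makes it comparable across algorithms that signal at different rates.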

2021

- Machine Learning for Health: Algorithm Auditing & Quality Control. Luis Oala, Andrew G. Murchison, Pradeep Balachandran, and 36 more authors. Journal of Medical Systems, Nov 2021

Developers proposing new machine learning for health (ML4H) tools often pledge to match or even surpass the performance of existing tools, yet the reality is usually more complicated. Reliable deployment of ML4H to the real world is challenging as examples from diabetic retinopathy or Covid-19 screening show. We envision an integrated framework of algorithm auditing and quality control that provides a path towards the effective and reliable application of ML systems in healthcare. In this editorial, we give a summary of ongoing work towards that vision and announce a call for participation to the special issue Machine Learning for Health: Algorithm Auditing & Quality Control in this journal to advance the practice of ML4H auditing.

2020

- EventEpi—A natural language processing framework for event-based surveillance. Auss Abbood, Alexander Ullrich, Rüdiger Busche, and 1 more author. PLOS Computational Biology, Nov 2020

According to the World Health Organization (WHO), around 60% of all outbreaks are detected using informal sources. In many public health institutes, including the WHO and the Robert Koch Institute (RKI), dedicated groups of public health agents sift through numerous articles and newsletters to detect relevant events. This media screening is one important part of event-based surveillance (EBS). Reading the articles, discussing their relevance, and putting key information into a database is a time-consuming process. To support EBS, but also to gain insights into what makes an article and the event it describes relevant, we developed a natural language processing framework for automated information extraction and relevance scoring. First, we scraped relevant sources for EBS as done at the RKI (WHO Disease Outbreak News and ProMED) and automatically extracted the articles’ key data: disease, country, date, and confirmed-case count. For this, we performed named entity recognition in two steps: EpiTator, an open-source epidemiological annotation tool, suggested many different possibilities for each. We extracted the key country and disease using a heuristic with good results. We trained a naive Bayes classifier to find the key date and confirmed-case count, using the RKI’s EBS database as labels, which performed modestly. Then, for relevance scoring, we defined two classes to which any article might belong: The article is relevant if it is in the EBS database and irrelevant otherwise. We compared the performance of different classifiers, using bag-of-words, document and word embeddings. The best classifier, a logistic regression, achieved a sensitivity of 0.82 and an index balanced accuracy of 0.61. Finally, we integrated these functionalities into a web application called EventEpi where relevant sources are automatically analyzed and put into a database. The user can also provide any URL or text, which will be analyzed in the same way and added to the database. Each of these steps could be improved, in particular with larger labeled datasets and fine-tuning of the learning algorithms. The overall framework, however, already works well and can be used in production, promising improvements in EBS. The source code and data are publicly available under open licenses.
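The two scores reported for the relevance classifier can be illustrated with a small sketch. Sensitivity is the true positive rate; the index balanced accuracy is written here in its common form, (1 + α · (sensitivity − specificity)) · sensitivity · specificity. The abstract does not state the weighting α or the exact variant used, so `alpha=0.1` and the toy labels below are assumptions.

```python
def sensitivity_specificity(y_true, y_pred):
    """Return (sensitivity, specificity) for binary labels, 1 = relevant."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    tn = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 0)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)


def index_balanced_accuracy(sensitivity, specificity, alpha=0.1):
    """Squared geometric mean of both rates, weighted by their difference.

    alpha is an assumed weighting, not a value taken from the paper.
    """
    dominance = sensitivity - specificity
    return (1 + alpha * dominance) * sensitivity * specificity


# Hypothetical predictions for ten articles (4 relevant, 6 irrelevant).
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 0, 0, 0, 0, 1, 1]
sens, spec = sensitivity_specificity(y_true, y_pred)
iba = index_balanced_accuracy(sens, spec)
```

Because relevant articles are far rarer than irrelevant ones in media screening, a score that rewards both rates at once is more informative than plain accuracy.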

- EventEpi—A natural language processing framework for event-based surveillance. Auss Abbood, Alexander Ullrich, Rüdiger Busche, and 1 more author. Applied Machine Learning Days, Jan 2020
